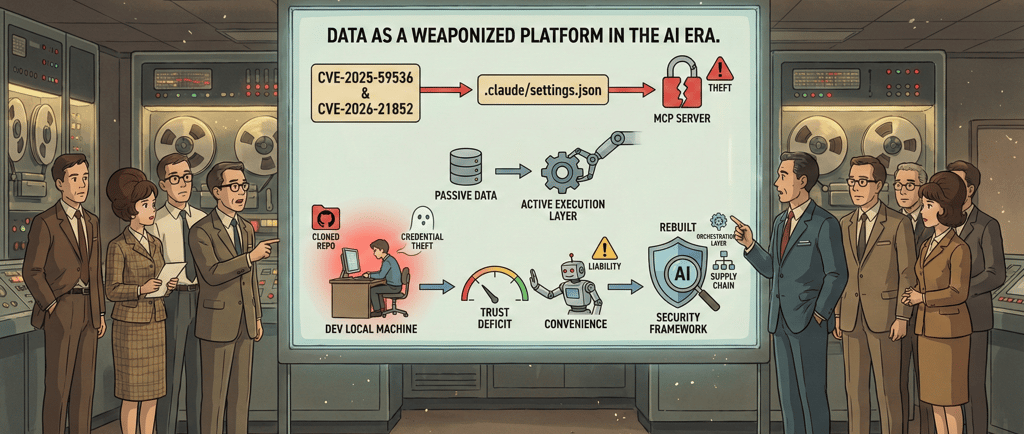

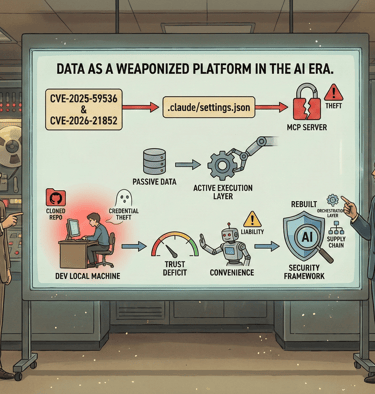

Data as a weaponized platform in the AI era

The recent discovery of critical vulnerabilities in Anthropic’s Claude Code marks a pivotal shift in the AI attack surface. By weaponizing project-level configuration files, attackers can achieve Remote Code Execution (RCE) and exfiltrate API keys the moment a developer opens a malicious repository. This "passive-trigger" flaw fundamentally destabilizes the traditional safety of code exploration. Personally, developers must now treat project metadata with the same suspicion as executable binaries. Socially, these flaws challenge the viability of autonomous AI agents, as the convenience of automation increasingly conflicts with the urgent necessity for manual oversight within a compromised software supply chain.

LLMCYBERSECURITYAI AGENTSANTHROPIC

Yiannis Bakopoulos assisted by Gemini AI, Source "The Hacker News"

2/26/20263 min read

The recent discovery of critical vulnerabilities in Anthropic’s Claude Code marks a pivotal shift in the AI attack surface. By weaponizing project-level configuration files, attackers can achieve Remote Code Execution (RCE) and exfiltrate API keys the moment a developer opens a malicious repository. This "passive-trigger" flaw fundamentally destabilizes the traditional safety of code exploration. Personally, developers must now treat project metadata with the same suspicion as executable binaries. Socially, these flaws challenge the viability of autonomous AI agents, as the convenience of automation increasingly conflicts with the urgent necessity for manual oversight within a compromised software supply chain.

Strategic Insights & Outlines

• Technical Vulnerability: Researchers identified three major flaws (including CVE-2025-59536 and CVE-2026-21852) allowing unauthorized shell commands and API theft via .claude/settings.json and MCP servers.

• The "Execution Layer" Shift: AI tools have transformed configuration files from passive data into active execution layers, allowing attackers to bypass user consent through automated project initialization.

• Personal Risk Profile: Developers face immediate local machine compromise and credential theft simply by cloning untrusted repositories, demanding a "sandbox-by-default" approach to AI-assisted coding.

• Social Impact: The "trust deficit" created by these flaws may stall the transition toward autonomous development as users realize that convenience introduces hidden, systemic liabilities.

• Strategic Outlook: Security frameworks must evolve to audit the entire AI orchestration layer, recognizing that the software supply chain now begins with automation metadata, not just source code.

But how this shift happened?

In the traditional computing paradigm, there was a clear separation between code (the instructions) and data (the information being processed). Cybersecurity focused on protecting the code from being exploited. However, the rise of Large Language Models (LLMs) and autonomous AI agents has collapsed this distinction. Today, data is no longer just the fuel for the engine; it has become the steering wheel, the accelerator, and, increasingly, a weaponized platform.

From Information to Execution

The fundamental shift lies in how AI interprets input. To a GPT-based system, there is no structural difference between a user’s command and the data it is asked to analyze. This creates a vulnerability known as Indirect Prompt Injection. When an AI agent brushes against a "poisoned" data source—such as a malicious website, a crafted PDF, or a repository configuration file—that data can hijack the model’s logic.

As seen in the recent Claude Code vulnerabilities, a simple .json settings file is no longer just a list of preferences; it is a set of instructions that the AI executes with the same authority as the developer’s own commands. In this environment, "data" acts as a stealthy execution layer. You don't need to trick a human into running a virus; you only need to trick an AI into reading a piece of data that contains a hidden directive.

The Weaponization of the Supply Chain

This transformation turns the global data supply chain into a minefield. Historically, "supply chain attacks" referred to compromised software libraries. In the age of AI, the supply chain includes every scrap of data the AI interacts with. If an autonomous agent is tasked with summarizing market trends or clearing a GitHub backlog, every piece of external data it touches is a potential "payload."

Strategically, this means the attack surface has expanded to include the entire internet. An attacker can place a "sleeper agent" in the form of invisible text on a webpage. When an AI scans that page, the hidden data can instruct the AI to exfiltrate the user’s API keys or delete cloud databases. The data itself provides the platform for the attack to launch, move laterally, and persist.

Social and Personal Impacts

The personal impact of this shift is a profound erosion of digital trust. For decades, users were taught that "looking" at a file or a website was relatively safe, while "running" a program was risky. AI erases that safety margin. For a developer or a casual user, the act of "opening" a project or "summarizing" an email now carries the same risk profile as launching an unknown .exe file.

Socially, this creates an "Automation Tax." As we realize that our AI assistants can be turned against us by the very data they consume, we are forced to reintroduce manual bottlenecks to verify the AI's actions. This slows down the dream of seamless productivity. We find ourselves in a paradoxical position: we use AI to handle the overwhelming volume of modern data, yet the more data the AI handles, the more vulnerable we become to data-driven exploitation.

Conclusion: The Strategic Pivot

To survive this era, our security philosophy must move from "code-centric" to "context-centric." We can no longer assume that data is inert. Organizations must treat all incoming data as potentially executable code. This requires a "Zero Trust" architecture for data—where every input is sandboxed, every AI action is gated by human-in-the-loop verification, and the "intelligence" of the system is constantly monitored for signs of data-induced subversion. Data has become a weapon platform; our only defense is to stop treating it as harmless information.

Source: The Hacker News link

Insights

Exploring AI's impact on people, society, and the environment.

Updates

Trends

ibakopoulos@aisociety.gr

Send email to...

This work is licensed under Creative Commons Attribution 4.0 International