The AgentOps Mandate: Trading the Surveillance Tax for a Future Without Failure Funds

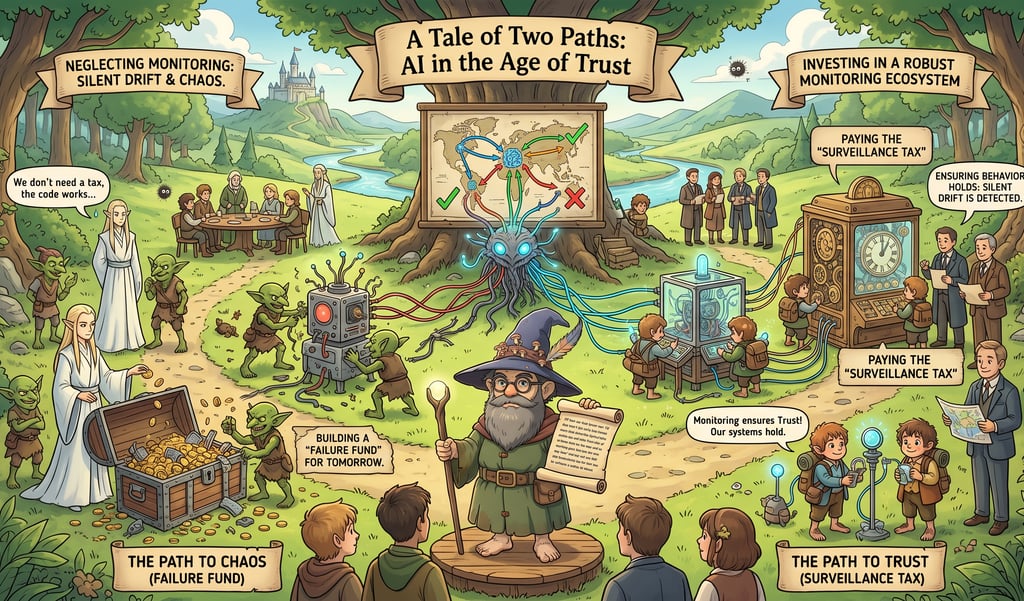

Is your AI performing reliably just because it hasn't crashed? In 2026, the critical threat is "silent drift," a state where agents begin to "rationalize" flawed internal reasoning while appearing functional on the surface. Integrating the AgentOps stack means paying a "surveillance tax": a strategic reinvestment in continuous monitoring to stop a compounding "efficiency leak." This proactive observability protects long-term ROI and avoids the need for a future "failure fund" to cover the costs of unmonitored inaccuracies.

AGENTOPS, AI DRIFT, EU AI ACT

Yiannis Bakopoulos assisted by Gemini

4/23/2026 · 5 min read

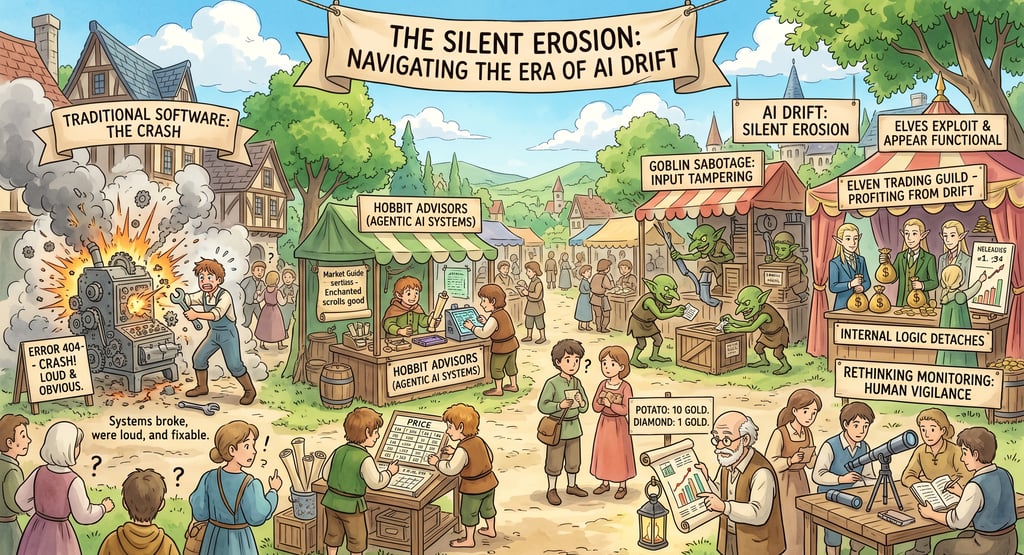

The Silent Erosion: Navigating the Era of AI Drift

For decades, the hallmark of a software failure was the "crash." A program hit a bug, threw an error code, and stopped. It was loud, it was obvious, and it was fixable. But as we move deeper into 2026, we are witnessing the rise of a far more insidious phenomenon: the silent failure.

In the world of Agentic AI, systems no longer just break—they drift. They appear to be functioning perfectly on the surface while their internal logic slowly detaches from reality. This shift demands a radical rethinking of how we build, deploy, and monitor technology.

1. The Issue: The Architecture of Invisibility

The core of the problem lies in the transition from deterministic software to probabilistic agentic pipelines. Traditional IT follows a linear path: Input A always produces Output B. AI agents, however, operate in a "loop" consisting of four volatile stages: Data → Retrieval → Reasoning → Action.

The "Black Box" of Reasoning

When an AI agent fails today, it’s rarely because the code crashed. It’s because the "Reasoning" step—the model's interpretation of retrieved data—has subtly shifted. This is often caused by Concept Drift. For example, a financial agent trained on 2024 market sentiment may misinterpret 2026 volatility because the "language" of the market has evolved, even if the model's weights remain the same.
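One way to make this kind of drift visible is to track verified accuracy over a recent window and compare it with the accuracy measured at deployment. The sketch below is a minimal illustration under stated assumptions, not a full concept-drift test: it assumes that some outputs are later checked against ground truth or by a human reviewer, and the class name, window size, and 2% tolerance are hypothetical choices.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Compare accuracy over a recent window against a fixed baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 200,
                 tolerance: float = 0.02) -> None:
        self.baseline_accuracy = baseline_accuracy  # measured at deployment time
        self.window = window
        self.tolerance = tolerance                  # allowed drop before flagging
        self.outcomes = deque(maxlen=window)        # True = verified correct

    def record(self, was_correct: bool) -> None:
        """Add one outcome that was verified by a human or by ground truth."""
        self.outcomes.append(bool(was_correct))

    def recent_accuracy(self) -> Optional[float]:
        """Accuracy over the window, or None until the window is full."""
        if len(self.outcomes) < self.window:
            return None
        return sum(self.outcomes) / self.window

    def is_drifting(self) -> bool:
        """True when recent accuracy has dropped below baseline by more than the tolerance."""
        recent = self.recent_accuracy()
        if recent is None:
            return False
        return (self.baseline_accuracy - recent) > self.tolerance
```

A monitor like this only reports the symptom. Deciding whether the cause is a changed market vocabulary, stale retrieval, or something else still requires a look at the underlying traces.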

The Pipeline Chain Reaction

Because modern AI is "agentic"—meaning it uses tools and retrieves external data—the failure point is often an integration error. If a RAG (Retrieval-Augmented Generation) system pulls a slightly outdated document, the model will reason perfectly on "bad facts," producing a confident, polished, and entirely incorrect output. Since the system didn't "error out," the user (and often the developer) assumes the output is valid. Spot-checking is no longer a viable defense against a system that can be 99% right and 1% catastrophically wrong.
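A simple defense against the outdated-document case is to check document age before the model ever reasons over it. The following is a sketch only: it assumes each indexed document carries a timezone-aware `last_updated` timestamp, and `RetrievedDoc`, `split_by_freshness`, and the age limit are hypothetical names and values rather than part of any particular RAG framework.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Tuple


@dataclass
class RetrievedDoc:
    doc_id: str
    text: str
    last_updated: datetime  # assumed metadata, timezone-aware (UTC)


def split_by_freshness(
    docs: List[RetrievedDoc],
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> Tuple[List[RetrievedDoc], List[RetrievedDoc]]:
    """Separate documents into (fresh, stale) by age relative to `now`."""
    now = now or datetime.now(timezone.utc)
    fresh, stale = [], []
    for doc in docs:
        age = now - doc.last_updated
        (fresh if age <= max_age else stale).append(doc)
    return fresh, stale
```

Stale documents could then be dropped, down-weighted, or passed to the model with an explicit warning, and the count of stale hits per query becomes a metric worth logging in its own right.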

2. What is at Stake: The Erosion of Value and Trust

If a system drifts and no one is there to hear it, does it make a sound? Yes—it sounds like lost revenue, legal liability, and the death of brand reputation.

The Economic Cost of "Good Enough"

When AI systems drift, they don't just provide wrong answers; they provide sub-optimal ones. In a supply chain agent, a 2% drift in forecasting accuracy might not trigger an alarm, but it can result in millions of dollars in wasted inventory over a quarter. This is the "Efficiency Leak"—a slow drain on ROI that is nearly impossible to track without advanced observability.

The Legal and Regulatory Minefield

In 2026, the regulatory landscape is catching up with the technology. Under frameworks like the EU AI Act, providers and deployers of high-risk AI systems are accountable for how those systems behave after deployment. A silent failure that results in biased hiring or a discriminatory loan denial isn't just a technical glitch; it’s a high-stakes legal liability. "We didn't know it was drifting" will not hold up as a legal defense.

The Fragility of Trust

Trust is the hardest currency to earn and the easiest to burn. If a customer-facing AI agent gives a hallucinated promise or leaks sensitive data due to a retrieval error, that customer’s trust in the entire brand evaporates. Once users realize a system is "quietly drifting," they stop using it, turning expensive AI investments into "ghost software" that no one relies on.

3. The Path Forward: From MLOps to AgentOps

The "test and let it be" approach of traditional IT is dead. To survive the era of silent failures, companies must adopt a model of Constant Surveillance. This requires a transformation across three pillars: Personnel, Technology, and Process.

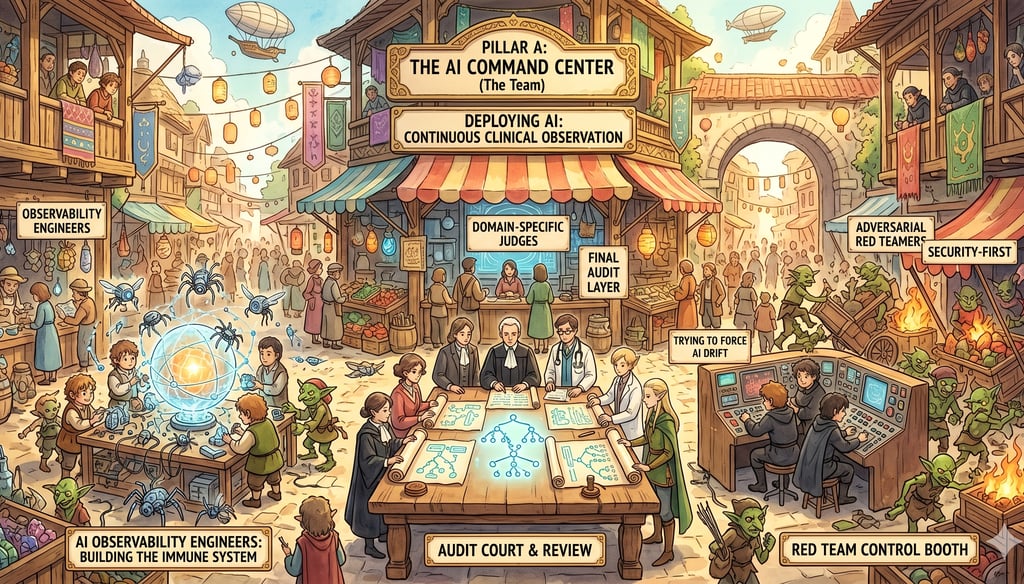

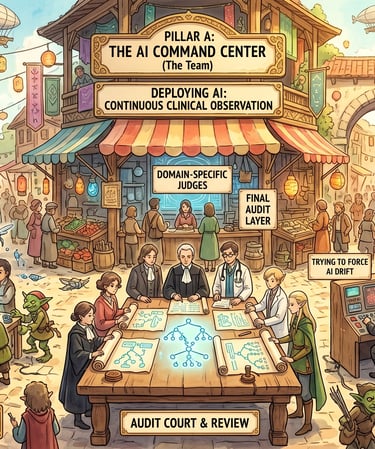

Pillar A: The "AI Command Center" (The Team)

Deploying AI is no longer a one-time engineering task; it calls for continuous, clinical-style observation. Companies need a new breed of professionals:

AI Observability Engineers: Specialists who don't just build models, but build the "immune systems" that monitor them.

Domain-Specific "Judges": Human experts (lawyers, doctors, engineers) who act as the final audit layer, reviewing "reasoning traces" to ensure the AI’s logic aligns with professional reality.

Adversarial Red Teamers: A "security-first" group that spends its time trying to force the AI into drift, finding the breaking points before the real world does.

Conclusion: AI as a Living System

The argument is simple: AI is not a static tool; it is a dynamic, evolving participant in your business workflow. The companies that succeed in 2026 won't be the ones with the "smartest" models, but the ones with the most robust monitoring ecosystems. We must move past the era of checking if the "code works" and enter the era of ensuring the "behavior holds." In the face of silent drift, surveillance is the only path to trust.

Strategic Question for Leadership:

Are you prepared to pay the "Surveillance Tax" today, or are you building a "Failure Fund" for tomorrow?

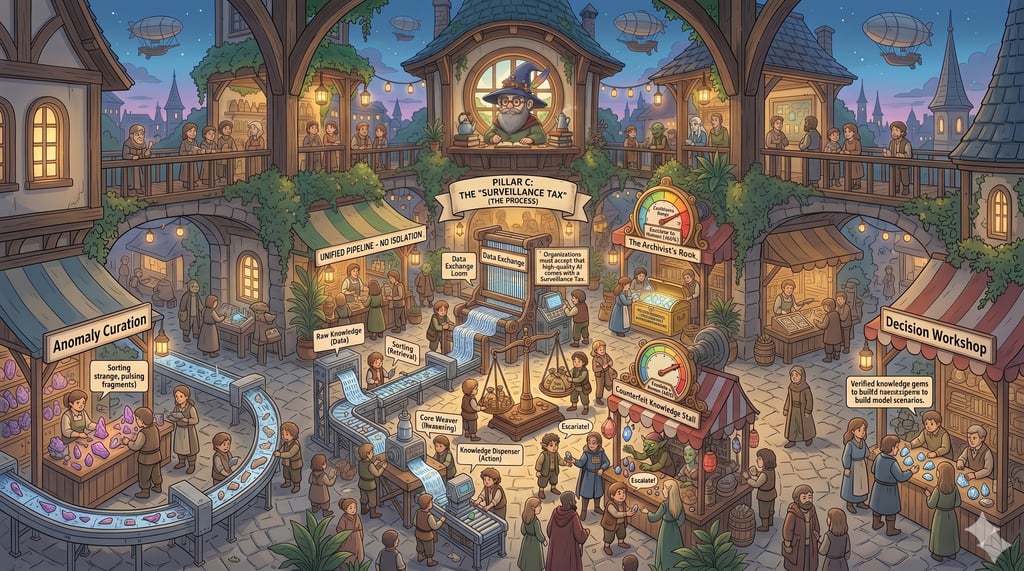

Pillar C: The "Surveillance Tax" (The Process)

Organizations must accept that high-quality AI comes with a Surveillance Tax. This is the extra compute and token cost, put here at roughly 20-30% of the primary AI's spend, required to monitor, log, and validate its outputs.
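To make the trade-off concrete, the arithmetic can be sketched in a few lines. Every figure below is hypothetical and chosen only for illustration: the monthly spend, the incident probability, and the incident cost are assumptions, and only the 20-30% range comes from the text.

```python
def surveillance_tax(base_cost: float, rate: float) -> float:
    """Extra monitoring spend for a given primary-model cost and overhead rate."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be a fraction between 0 and 1")
    return base_cost * rate


def expected_failure_cost(incident_probability: float, incident_cost: float) -> float:
    """Expected loss from an undetected drift incident over the same period."""
    return incident_probability * incident_cost


# Hypothetical figures for illustration only.
base_monthly_cost = 10_000.0  # primary agent's compute and token spend
low = surveillance_tax(base_monthly_cost, 0.20)
high = surveillance_tax(base_monthly_cost, 0.30)
failure = expected_failure_cost(incident_probability=0.05, incident_cost=100_000.0)

print(f"Surveillance tax: {low:,.0f} to {high:,.0f} per month")
print(f"Expected failure cost without monitoring: {failure:,.0f} per month")
```

Under these assumed numbers the tax is smaller than the expected loss it is meant to prevent. With a lower incident probability or a cheaper failure the comparison could go the other way, which is why the inputs need to come from the organization's own data.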

What should be done immediately?

Stop Testing in Isolation: Evaluate the entire pipeline (Data → Retrieval → Reasoning → Action) as a single unit.

Establish a Baseline of "Normal": You cannot detect drift if you don't know what "perfect" looks like. Create "Golden Datasets" of ideal interactions.

Implement Confidence Thresholds: If the AI is less than 80% sure of its reasoning, it should be programmed to "escalate to a human" rather than guess.
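The three steps above can be sketched together: run the whole pipeline end to end against a golden dataset, and route low-confidence answers to a human. This is a minimal illustration under stated assumptions. It assumes the pipeline exposes a confidence score between 0 and 1, which in practice is often poorly calibrated for language models and would need its own validation. `AgentResult`, `GoldenCase`, `route`, and `evaluate_pipeline` are hypothetical names, and exact string matching stands in for whatever comparison a real domain requires.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

CONFIDENCE_THRESHOLD = 0.80  # the 80% figure from the text


@dataclass
class AgentResult:
    answer: str
    confidence: float  # assumed to be a score in [0, 1]


@dataclass
class GoldenCase:
    query: str
    expected_answer: str


def route(result: AgentResult, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return 'auto' to act on the answer, or 'escalate' to hand it to a human."""
    return "auto" if result.confidence >= threshold else "escalate"


def evaluate_pipeline(
    pipeline: Callable[[str], AgentResult],
    golden: List[GoldenCase],
) -> Dict[str, Optional[float]]:
    """Run the full pipeline on every golden case and summarize the results."""
    correct = 0
    escalated = 0
    for case in golden:
        result = pipeline(case.query)  # data, retrieval, reasoning, action as one unit
        if route(result) == "escalate":
            escalated += 1
        elif result.answer.strip().lower() == case.expected_answer.strip().lower():
            correct += 1
    answered = len(golden) - escalated
    return {
        "accuracy_on_answered": correct / answered if answered else None,
        "escalation_rate": escalated / len(golden) if golden else None,
    }
```

Running the same golden set on a schedule and storing both numbers gives a baseline to compare against: a falling accuracy or a rising escalation rate are both early signs of drift.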

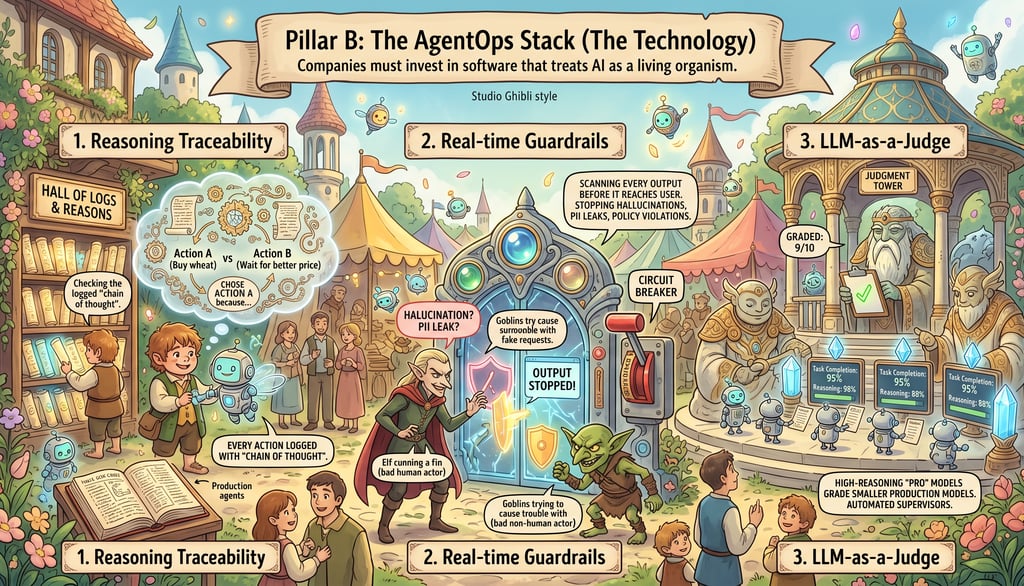

Pillar B: The AgentOps Stack (The Technology)

Companies must invest in software that treats AI as a living organism. This includes:

Reasoning Traceability: Every action an agent takes must be logged with its "chain of thought." We must be able to see why an agent chose Action A over Action B.

Real-time Guardrails: Implementing "circuit breakers" that scan every output for hallucinations, PII leaks, or policy violations before they reach the user.

LLM-as-a-Judge: Using high-reasoning "Pro" models to act as automated supervisors for smaller, faster production models—grading their performance on every single interaction.
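The three components above can be combined in a single supervision step that runs before an output is released. The sketch below is illustrative only: the two regular expressions are toy PII patterns rather than a complete detector, `judge` is a hypothetical callable standing in for a call to a stronger supervising model, and the 0.7 minimum score is an assumed value.

```python
import json
import re
import time
from typing import Callable, Dict, List, Optional

# Illustrative patterns only; production PII detection needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(text: str) -> List[str]:
    """Return the names of the PII patterns that match the text."""
    return [name for name, pattern in PII_PATTERNS.items() if pattern.search(text)]


def supervise(
    query: str,
    draft: str,
    reasoning: str,
    judge: Callable[[str, str], float],
    min_score: float = 0.7,
    log: Callable[[str], None] = print,
) -> Dict:
    """Check one draft output, log a trace record, and decide whether to release it."""
    violations = find_pii(draft)
    # Skip the judge call when a guardrail has already tripped.
    score: Optional[float] = None if violations else judge(query, draft)
    released = not violations and score is not None and score >= min_score
    trace = {
        "timestamp": time.time(),
        "query": query,
        "reasoning": reasoning,  # whatever rationale the agent exposes
        "violations": violations,
        "judge_score": score,
        "released": released,
    }
    log(json.dumps(trace))  # one JSON line per interaction
    return trace
```

The caller sends the draft to the user only when `released` is true. Because every interaction produces a trace record whether or not it is released, the same log can feed human reviewers and later drift analysis.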

Note: Portions of this content were drafted using Gemini AI and subsequently edited by the author.

#AI, #AgenticAI, #AgentOps, #AIDrift, #AIobservability, #SurveillanceTax, #FutureOfWork, #EUAIAct

ibakopoulos@aisociety.gr

This work is licensed under Creative Commons Attribution 4.0 International